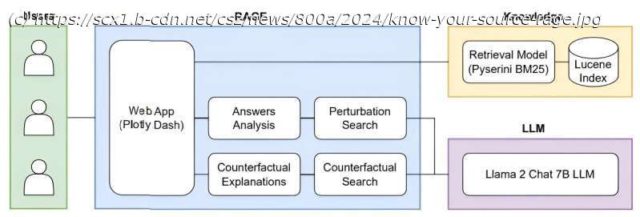

A team of researchers based at the University of Waterloo has created a new tool, nicknamed "RAGE," that reveals where large language models (LLMs) such as ChatGPT get their information and whether that information can be trusted.

LLMs such as ChatGPT rely on "unsupervised deep learning," making connections and absorbing information from across the internet in ways that can be difficult for their programmers and users to decipher. Furthermore, LLMs are prone to "hallucination": they write convincingly about concepts and sources that are incorrect or nonexistent.